Abstract

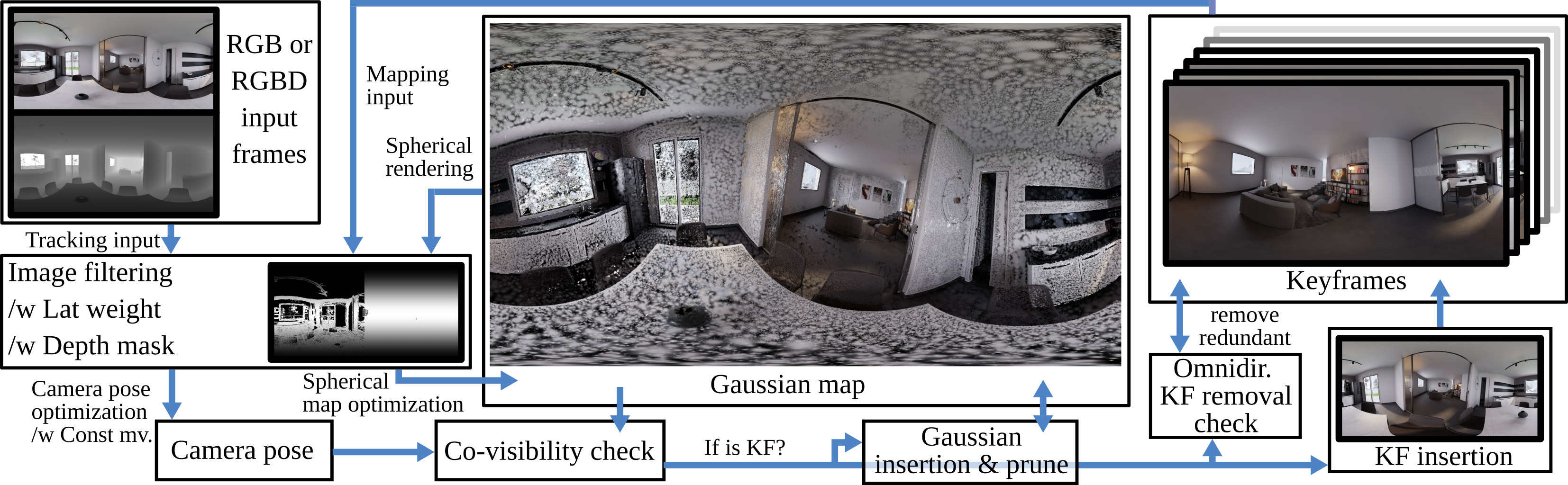

This work presents ODGS-SLAM, an omnidirectional simultaneous localization and mapping (SLAM) system that uses 3D Gaussian Splatting (3DGS) as the unified representation for tracking and mapping. It reconstructs scene geometry as splats from panoramic image sequences (RGB or RGB-D) while simultaneously estimating the camera poses. Such a framework is important for understanding the full surroundings, e.g., in augmented reality applications or autonomous systems. We extend existing 3DGS-SLAM methods to handle omnidirectional input by deriving closed-form gradients for mapping and camera pose estimation under an equirectangular projection model. To reduce the memory footprint, we propose a keyframe removal procedure based on graph analysis, which enables the application to handle larger inputs. For evaluation, we provide a dataset of controlled real-world and synthetic test scenes (indoor and outdoor), rendered with a custom-developed virtual camera lens. An extensive evaluation shows that, for camera tracking, the proposed method achieves statistically significantly lower ATE RMSE scores than a recent omnidirectional SLAM system and other 3DGS-SLAM frameworks, while reaching similar mapping performance.
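
To make the equirectangular projection model mentioned above concrete, the following is a minimal sketch of the standard mapping between a 3D point in the camera frame and a panoramic pixel. It is not taken from the ODGS-SLAM implementation: the function names, the axis convention (x right, y down, z forward), and the pixel origin at the top-left corner are all assumptions made for illustration, and the closed-form gradients used by the method are not shown.

```python
import math


def project_equirect(point, width, height):
    """Project a 3D camera-frame point to equirectangular pixel coordinates.

    Assumed convention (illustrative only): x right, y down, z forward;
    the image center corresponds to the forward direction.
    """
    x, y, z = point
    r = math.sqrt(x * x + y * y + z * z)
    if r == 0.0:
        raise ValueError("a point at the camera center has no viewing direction")
    lon = math.atan2(x, z)                        # longitude in (-pi, pi]
    lat = math.asin(max(-1.0, min(1.0, y / r)))   # latitude in [-pi/2, pi/2]
    u = (lon / (2.0 * math.pi) + 0.5) * width
    v = (lat / math.pi + 0.5) * height
    return u, v


def unproject_equirect(u, v, width, height):
    """Map a pixel back to a unit viewing direction (inverse of the above)."""
    lon = (u / width - 0.5) * 2.0 * math.pi
    lat = (v / height - 0.5) * math.pi
    return (
        math.cos(lat) * math.sin(lon),
        math.sin(lat),
        math.cos(lat) * math.cos(lon),
    )


# Usage: a point straight ahead lands at the image center.
u, v = project_equirect((0.0, 0.0, 1.0), 2048, 1024)
```

Because every viewing direction maps to a valid pixel, no frustum culling by field of view is needed in this model; only the depth ambiguity along each ray remains.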

Dataset

The ODGS-SLAM dataset is a collection of controlled real-world and synthetic test sequences for omnidirectional, multi-camera, fisheye, and perspective camera setups, together with ground-truth trajectories. It serves as a benchmark for comparing visual SLAM systems across different input image modalities.
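
As an illustration of how ground-truth trajectories are typically used in such a benchmark, the sketch below computes the absolute trajectory error (ATE) as a root-mean-square error over camera positions. It assumes the estimated and ground-truth trajectories are already time-associated and expressed in the same coordinate frame; the alignment step that evaluation tools usually apply beforehand is omitted. The function name `ate_rmse` and the input layout (lists of `(x, y, z)` tuples) are hypothetical and do not describe the dataset's file format or the authors' evaluation code.

```python
import math


def ate_rmse(estimated, ground_truth):
    """RMSE of per-frame position errors between two aligned trajectories.

    Both inputs are sequences of (x, y, z) positions with one entry per
    frame. Alignment and time association are assumed to be done already.
    """
    if len(estimated) != len(ground_truth) or len(estimated) == 0:
        raise ValueError("trajectories must be non-empty and of equal length")
    squared_error_sum = 0.0
    for est, gt in zip(estimated, ground_truth):
        squared_error_sum += sum((a - b) ** 2 for a, b in zip(est, gt))
    return math.sqrt(squared_error_sum / len(estimated))


# Usage with two toy two-frame trajectories.
error = ate_rmse([(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
                 [(0.0, 0.0, 0.0), (1.0, 0.0, 2.0)])
```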

Citation and Attribution

ODGS-SLAM: Omnidirectional Gaussian Splatting SLAM has been accepted at CVPR 2026 and will be published in the conference proceedings. If you use this work or dataset, please cite the paper. Once the official reference is available, the citation details will be provided here.